On December 16, 2024, Natalie Rupnow (also known as Samantha) carried out a school shooting at the Abundant Life Christian School in Madison, Wisconsin, resulting in three fatalities, including Rupnow herself, and six injuries. A few weeks later, on January 22, 2025, Solomon Henderson carried out a school shooting at Antioch High School in Nashville, Tennessee, that resulted in two fatalities, including Henderson, and one additional injury.

In the aftermath of these shootings, the ADL Center on Extremism (COE) analyzed Rupnow’s and Henderson’s online footprints and discovered that both spent substantial amounts of time in spaces that featured extremist ideologies and violent content. The following timeline provides an overview of Rupnow’s and Henderson’s online activity, highlighting incidents that showcase their engagement with violent and extreme online communities.

In addition, below the timeline are resources from COE on WatchPeopleDie, white supremacist efforts to recruit young people, and the 764 network.

More About Youth Violence & Extremism

From Gore to Hate: How “WatchPeopleDie” Serves as a Gateway to Extremism

Two school shootings, just weeks apart in late 2024 and early 2025, were united by a common thread: the violent gore website WatchPeopleDie.

Raised for the Reich

White supremacists have a long history of trying to recruit youths to join their movement as a means of filling their ranks and maintaining relevancy.

764

Hidden behind screens, members of the 764 network groom and trick minors through sextortion, swatting and abuse, all to gain clout online. While some are extremists, most are driven by nihilism and a desire for status and fame.

Make the Internet Safer

Congress hasn't updated our internet safety laws in nearly 30 years. Join ADL in demanding our lawmakers take action to protect users from dangerous online content.

Additional Resources

Educate yourself; share these resources with friends, family and local educators; talk to the kids in your life and if you see something, seek help.

Expand Investments in Community Based Violence Prevention

The tragic lessons learned from Rupnow’s and Henderson’s school shootings should not be ignored. Their engagement with and posting of violent content were among many missed opportunities to introduce targeted interventions that could have steered them off their pathways to violence. Yet programs that offer such “off-ramps” from extremism remain dangerously underfunded and unevenly distributed across the country.

The Department of Homeland Security’s Center for Prevention Programs and Partnerships (CP3) provides grants to community organizations aimed at preventing violence before it starts, especially among youth drawn into violent ideologies. These programs are not only cost-effective but also increasingly important in an environment in which minors are becoming perpetrators of ideologically motivated violence. Few law enforcement options or avenues of legal recourse exist to address this growing phenomenon, and law enforcement lacks the tools, bandwidth, and legal framework to intervene early enough.

Moreover, CP3 can play a key role in keeping antisemitism from becoming antisemitic violence. Community-based prevention programs can foster resilience, inoculate against hate, and deescalate extremist pathways before they result in harm.

To be most effective, CP3 must be strengthened, not sidelined. All grants and actions by CP3 must be paired with increased transparency and improved program evaluation. Now is the time to stop hate before it turns deadly.

Reduce the Spread of Extreme Online Violent Content

The horrific events around the school shootings perpetrated by Rupnow and Henderson clearly show the immense impact extreme online violent content can have on users, and how exposure to such content may inspire deadly violence. Lawmakers need to make a concerted effort to ensure digital social platforms, such as social media and online gaming companies, are held accountable. These incidents underscore the urgent need for policymakers to take action to ensure this content does not cause future harm.

- Hold Tech Companies Accountable for Harms They Cause: Almost thirty years ago, lawmakers put into place regulations and rules that still govern what content tech companies can be held liable for on their platforms. Over the past three decades, the internet has evolved tremendously, and these laws are no longer suitable to meet all the challenges we currently face. Congress should update the outdated internet governance regime to reflect the realities of the often-harmful business models of modern internet and tech companies. Given how social media platforms’ own tools and policies often exacerbate hate, harassment, and extremism, Congress must incentivize companies to protect users proactively. This should include revising Section 230 of the Communications Decency Act to create specific exceptions to liability protections for content that is algorithmically amplified, especially when such content poses a risk to public safety. These changes should be made in a manner that enables platforms to moderate hate, disinformation, and harassment under their terms of service and First Amendment rights.

- Enhance Transparency Regarding Content that Incites Violence: Whether it is malign users trying to replicate real-life mass shootings on gaming platforms or the people motivated to commit horrific antisemitic attacks in Washington, D.C., and Boulder, Colorado, online content clearly plays a role in driving real-world harm. Policymakers must work to ensure online companies outline or establish clear terms of service that define what content on their platforms could incite violence. Policymakers should also push transparency standards, including disclosures of how these companies enforce their terms of service, so that companies provide data on what they have or have not done to remove content that could be interpreted as inciting violence.

- Create Barriers to Prevent Malicious Actors from Livestreaming Graphic Online Content: Following the horrific October 7, 2023, Hamas terrorist attacks, ADL provided recommendations to help online companies ensure platforms cannot be weaponized to exacerbate violence, spread misinformation, and perpetuate trauma and emotional harm through livestreaming. While livestreaming is an important tool for both entertainment and informational purposes, it can also lead to graphic online content being shared more rapidly, as in the Tops supermarket shooting in Buffalo and the attempted livestream during the Antioch, Tennessee, school shooting. To keep these tools from being used for malicious purposes, policymakers should consider the following policy initiatives for online companies, so that users are not unduly exposed to graphic violent content and the spread of such content is reduced:

a. Implement Verification and Benchmark Requirements: Require companies to ensure that users meet specific benchmarks, such as account verification and follower thresholds, before gaining the ability to livestream without restrictions. This approach ensures that only trusted users can broadcast content, reducing the risk of anonymous individuals spreading livestreamed violence.

b. Gradual Access for New Streamers: Mandate that companies limit the number of viewers for first-time or relatively new streamers. Increase access gradually based on adherence to community guidelines, ensuring that users demonstrate responsible behavior before reaching larger audiences.

c. Restrict Livestream Links from Problematic Websites: Prohibit companies from hosting livestream links from websites known for hosting extremist and terrorist content. Redirect users attempting to access livestreams from such sites, preventing the amplification of violent content through external platforms.

d. Introduce Broadcast Delays for Unverified Users: Require companies to utilize tools to create significant delays in broadcasts for unverified users, providing moderators and AI tools sufficient time to review content and take action if it violates terms of service or involves unlawful behavior.

e. Invest in Advanced Moderation Tools: Encourage platforms to invest in tools that continuously monitor and capture violative livestreaming content. Content moderation should be proactive and ongoing, rather than reactive to national tragedies.

State & Local Policy Recommendations

- Prevent Radicalization in Online Spaces: States should take proactive measures to prevent radicalization through online platforms, by implementing programs and passing legislation that hold tech companies accountable for the spread of extremist content and promote transparency in content moderation. This includes further programming to foster digital literacy and critical thinking skills, empowering individuals to recognize and resist online recruitment efforts, extremist ideologies, and misinformation.

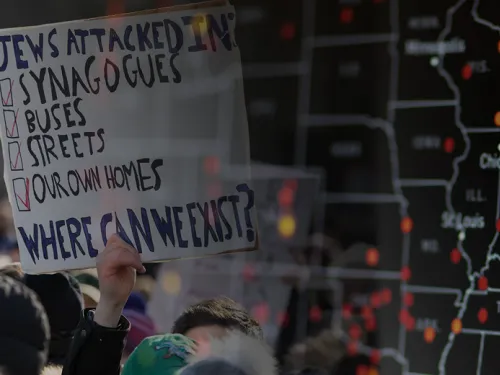

- Address Real-World Manifestations of Online Hate: Given the horrific events we have unfortunately seen time and time again, it is clear that online hate can lead to real-world harms. To better protect communities from these incidents, states should implement robust nonprofit security grant programs to protect vulnerable communities and pass hate crime laws, including legislation that combats the distribution of flyers and other hate materials, which can lead to the radicalization of young individuals.

- Enhance Education and Awareness in Schools: States should implement programs and pass legislation that lean on best practices for combating antisemitism and integrating Holocaust education throughout a student's educational journey. States should also implement comprehensive anti-bias education and provide professional development for educators to recognize and address antisemitism and other forms of hate. By fostering inclusive school environments and promoting understanding and respect for diverse cultures and religions, states can help build resilience against extremist ideologies and prevent radicalization.